While all proposed solutions make contributions to the neural representation of tabular data, there is still no systematic study to compare those representations given the different assumptions and target tasks.

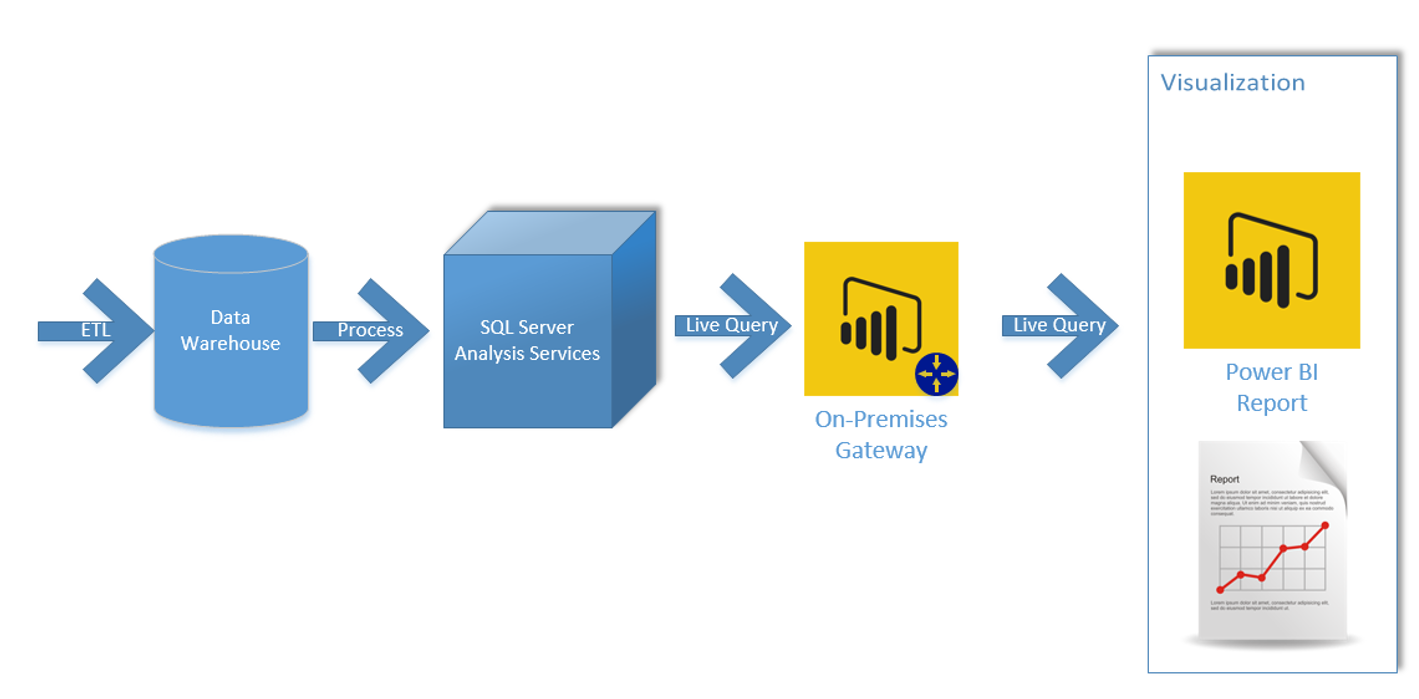

Recent work shows that a similar pre-training strategy leads to successful results when language models are developed for tabular data. Indeed, pre-trained LMs are very versatile, as demonstrated by the large number of tasks that practitioners solve by using these models with fine-tuning, such as improving Arabic opinion and emotion mining (Antoun et al., 2020 Badaro et al., 2014, 2018a, b, c, 2019, 2020). Such architectures, based on the attention mechanism, have proven to be successful as well on visual (Dosovitskiy et al., 2020 Khan et al., 2021), audio (Gong et al., 2021), and time series data (Cholakov and Kolev, 2021). Given the success of transformers in developing pre-trained language models (LMs) (Devlin et al., 2019 Liu et al., 2019), we focus our analysis on the extension of this architecture for producing representations of tabular data. Indeed, tabular data contain an extensive amount of knowledge, necessary in a multitude of tasks, such as business (Chabot et al., 2021) and medical operations (Raghupathi and Raghupathi, 2014 Dash et al., 2019), hence, the importance of developing table representations. Examples of tasks include answering queries expressed in natural language (Katsogiannis-Meimarakis and Koutrika, 2021 Herzig et al., 2020 Liu et al., 2021a), performing fact-checking (Chen et al., 2020b Yang and Zhu, 2021 Aly et al., 2021), doing semantic parsing (Yin et al., 2020 Yu et al., 2021), retrieving relevant tables (Pan et al., 2021 Kostić et al., 2021 Glass et al., 2021), understanding tables (Suhara et al., 2022 Du et al., 2021), and predicting table content (Deng et al., 2020 Iida et al., 2021). These models enable effective data-driven systems that go beyond the limits of traditional declarative specifications built around first order logic and SQL. Many researchers are studying how to represent tabular data with neural models for traditional and new natural language processing (NLP) and data management tasks. 
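To make the idea of feeding tabular data to a transformer-based LM concrete, the following is a minimal sketch of one common preprocessing step: flattening a table, optionally with a natural language question, into a single text sequence. The helper name `linearize_table`, the `[CLS]`/`[SEP]`/`[ROW]` markers, and the "column: value" layout are all illustrative assumptions; they are not the serialization used by any specific model cited here.

```python
from typing import List, Sequence


def linearize_table(
    header: Sequence[str],
    rows: Sequence[Sequence[object]],
    question: str = "",
) -> str:
    """Flatten a table (and an optional question) into one string.

    The [CLS]/[SEP]/[ROW] markers and the "column: value" layout are
    illustrative assumptions, not the format of any specific model.
    """
    parts: List[str] = ["[CLS]"]
    if question:
        parts += [question, "[SEP]"]
    for row in rows:
        if len(row) != len(header):
            raise ValueError("row length does not match header length")
        # Pair every cell with its column name so the schema stays visible.
        cells = [f"{col}: {val}" for col, val in zip(header, row)]
        parts += ["[ROW]", " | ".join(cells)]
    parts.append("[SEP]")
    return " ".join(parts)


# Made-up toy table, for illustration only.
header = ["country", "capital"]
rows = [["France", "Paris"], ["Japan", "Tokyo"]]
text = linearize_table(header, rows, question="What is the capital of Japan?")
print(text)
```

In practice the resulting string would still have to pass through a model's tokenizer, and many approaches add row and column position information on top of the plain text; the sketch leaves both out.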
Yury Gorishniy, Ivan Rubachev, Valentin Khrulkov, Artem Babenko

The existing literature on deep learning for tabular data proposes a wide range of novel architectures and reports competitive results on various datasets. However, the proposed models are usually not properly compared to each other, and existing works often use different benchmarks and experiment protocols. As a result, it is unclear for both researchers and practitioners what models perform best. Additionally, the field still lacks effective baselines, that is, easy-to-use models that provide competitive performance across different problems. In this work, we perform an overview of the main families of DL architectures for tabular data and raise the bar of baselines in tabular DL by identifying two simple and powerful deep architectures. The first one is a ResNet-like architecture which turns out to be a strong baseline that is often missing in prior works. The second model is our simple adaptation of the Transformer architecture for tabular data, which outperforms other solutions on most tasks. Both models are compared to many existing architectures on a diverse set of tasks under the same training and tuning protocols. We also compare the best DL models with Gradient Boosted Decision Trees and conclude that there is still no universally superior solution.
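As a rough illustration of what "ResNet-like" means for a row of numerical features, the sketch below implements a single residual block in plain Python: the block computes an update from the input and adds the input back through a skip connection. The function names, layer sizes, and random weights are assumptions made for this example. It omits normalization, dropout, and training, so it should not be read as the authors' exact architecture.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def linear(x: Vector, w: Matrix, b: Vector) -> Vector:
    """Compute w @ x + b for a single input vector."""
    return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(w, b)]


def relu(x: Vector) -> Vector:
    """Element-wise max(0, v)."""
    return [max(0.0, v) for v in x]


def residual_block(x: Vector, w1: Matrix, b1: Vector, w2: Matrix, b2: Vector) -> Vector:
    """Return x + Linear(ReLU(Linear(x))); the skip connection adds x back."""
    hidden = relu(linear(x, w1, b1))
    update = linear(hidden, w2, b2)
    return [xi + ui for xi, ui in zip(x, update)]


def init_matrix(n_out: int, n_in: int, rng: random.Random) -> Matrix:
    """Small random weights; a stand-in for a real initialization scheme."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]


rng = random.Random(0)
d, d_hidden = 4, 8  # hypothetical feature and hidden sizes
w1, b1 = init_matrix(d_hidden, d, rng), [0.0] * d_hidden
w2, b2 = init_matrix(d, d_hidden, rng), [0.0] * d

features = [0.5, -1.2, 3.0, 0.0]  # one made-up row of numerical features
out = residual_block(features, w1, b1, w2, b2)
print(out)
```

Because of the skip connection, a block whose weights are all zero returns its input unchanged, which is one reason such blocks can be stacked deeply without degrading the signal.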
